Upload a File to an S3 Bucket Using the AWS CLI

When working with Amazon S3 (Simple Storage Service), you're probably using the S3 web console to download, copy, or upload files to S3 buckets. Using the console is perfectly fine; that's what it was designed for, to begin with.

Especially for admins who are used to more mouse clicks than keyboard commands, the web console is probably the easiest. However, admins will eventually encounter the need to perform bulk file operations with Amazon S3, like an unattended file upload. The GUI is not the best tool for that.

For such automation requirements with Amazon Web Services, including Amazon S3, the AWS CLI tool provides admins with command-line options for managing Amazon S3 buckets and objects.

In this article, you will learn how to use the AWS CLI command-line tool to upload, copy, download, and synchronize files with Amazon S3. You will also learn the basics of providing access to your S3 bucket and configuring that access profile to work with the AWS CLI tool.

Prerequisites

Since this is a how-to article, there will be examples and demonstrations in the succeeding sections. For you to follow along successfully, you will need to meet several requirements.

- An AWS account. If you don't have an existing AWS subscription, you can sign up for an AWS Free Tier.

- An AWS S3 bucket. You can use an existing bucket if you'd prefer. Still, it is recommended to create an empty bucket instead. Please refer to Creating a bucket.

- A Windows 10 computer with at least Windows PowerShell 5.1. In this article, PowerShell 7.0.2 will be used.

- The AWS CLI version 2 tool must be installed on your computer.

- Local folders and files that you will upload or synchronize with Amazon S3.

Preparing Your AWS S3 Access

Suppose that you already have the requirements in place. You'd think you can already go and start operating AWS CLI with your S3 bucket. I mean, wouldn't it be nice if it were that simple?

For those of you who are just beginning to work with Amazon S3 or AWS in general, this section aims to help you set up access to S3 and configure an AWS CLI profile.

The full documentation for creating an IAM user in AWS can be found at this link: Creating an IAM User in Your AWS Account

Creating an IAM User with S3 Access Permission

When accessing AWS using the CLI, you will need to create one or more IAM users with enough access to the resources you intend to work with. In this section, you will create an IAM user with access to Amazon S3.

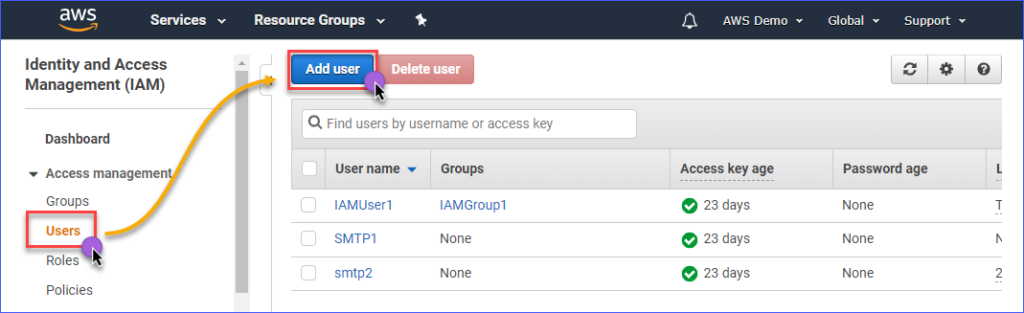

To create an IAM user with access to Amazon S3, you first need to log in to your AWS IAM console. Under the Access management group, click on Users. Next, click on Add user.

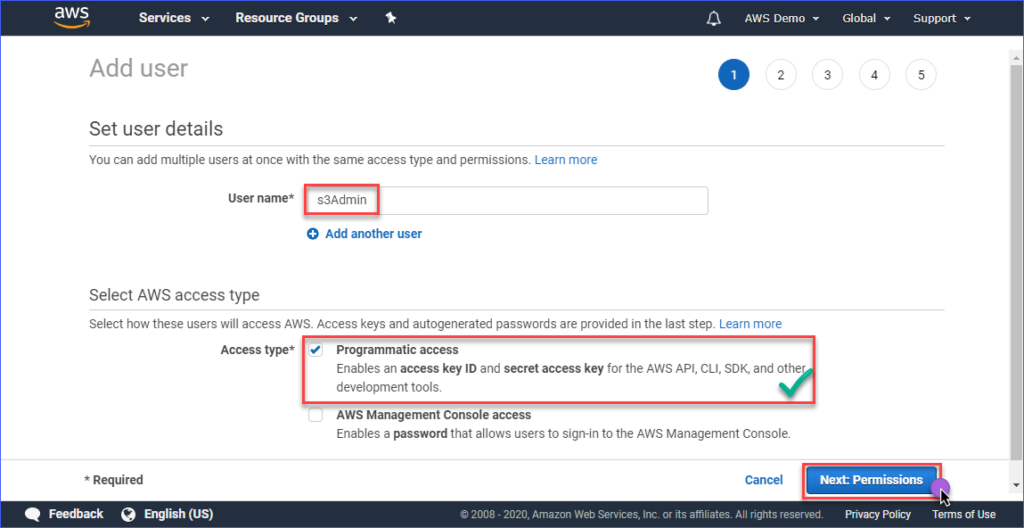

Type the name of the IAM user you are creating in the User name* box, such as s3Admin. In the Access type* selection, put a check on Programmatic access. Then, click the Next: Permissions button.

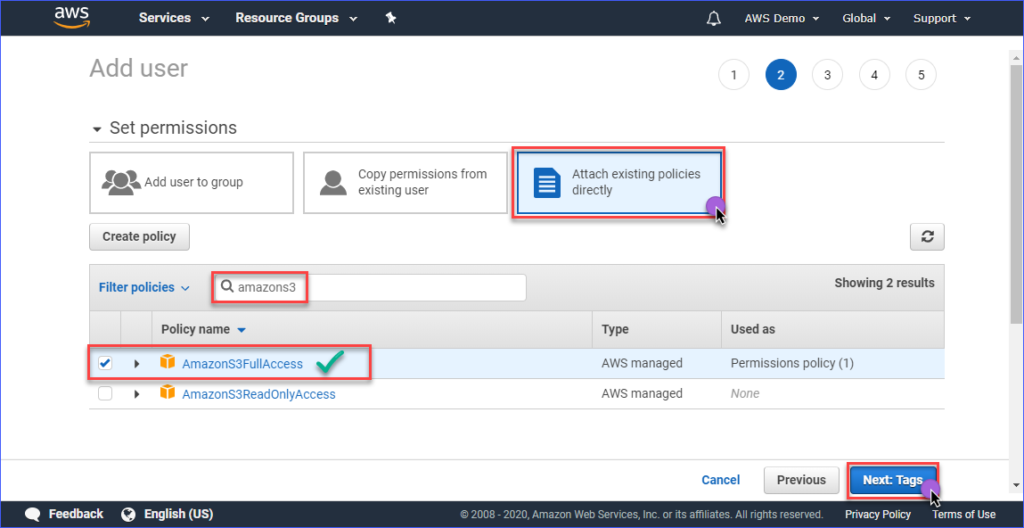

Next, click on Attach existing policies directly. Then, search for the AmazonS3FullAccess policy name and put a check on it. When done, click on Next: Tags.

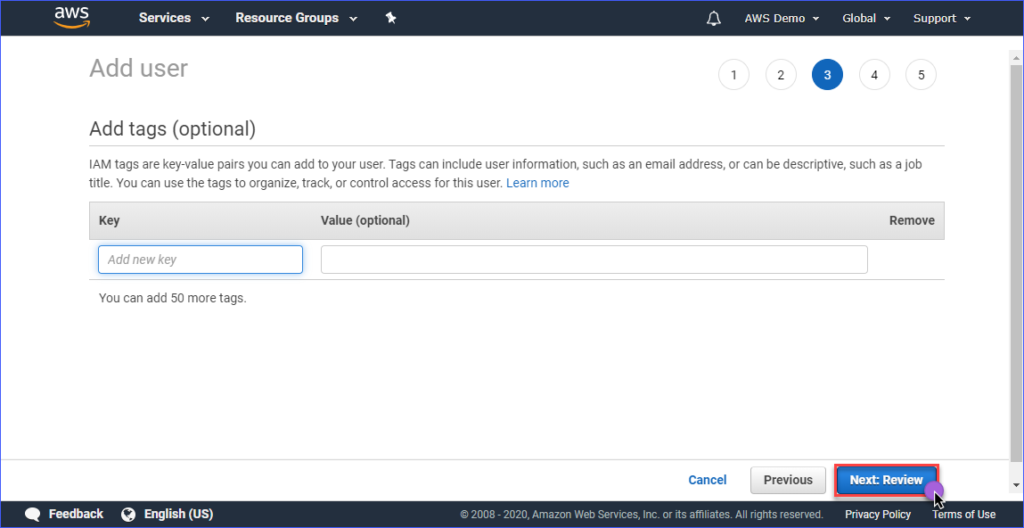

Creating tags is optional in the Add tags page, so you can skip this and click on the Next: Review button.

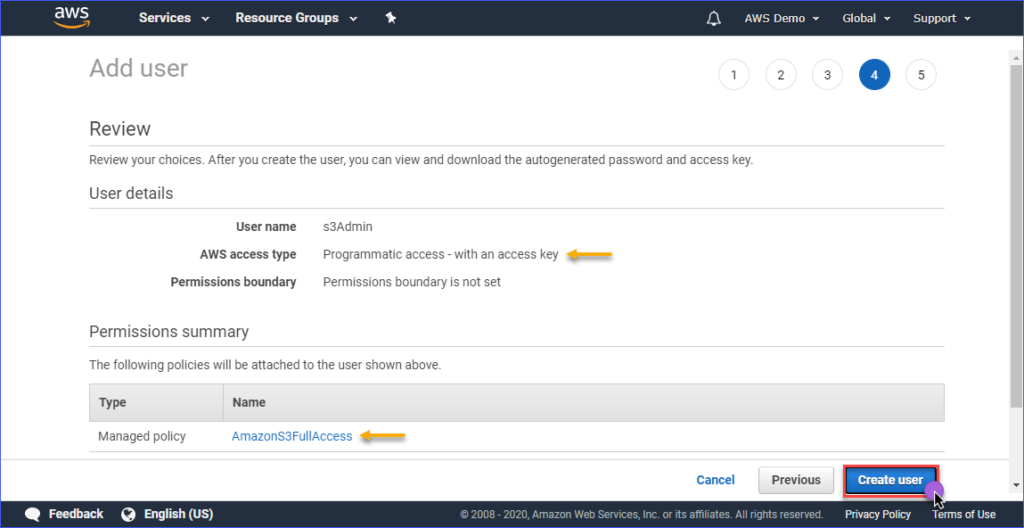

In the Review page, you are presented with a summary of the new account being created. Click Create user.

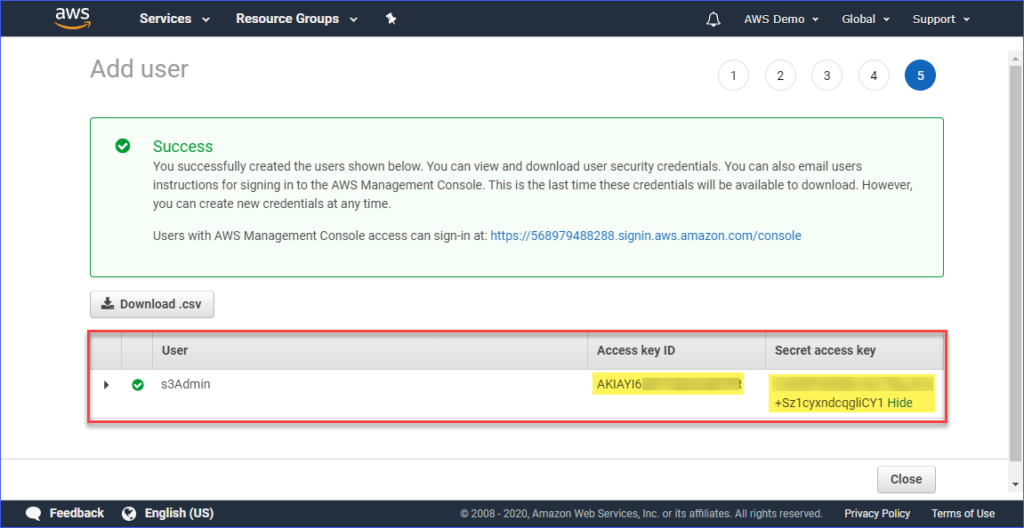

Finally, once the user is created, you must copy the Access key ID and the Secret access key values and save them for later use. Note that this is the only time that you can see these values.
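The console steps above can also be approximated from the command line. The following is only a sketch, written as a POSIX shell function (the aws invocations themselves carry over to PowerShell). It assumes the AWS CLI is already authenticated as some other identity that is allowed to manage IAM, which is not the case on a brand-new setup; the function name create_s3_user is made up for illustration.

```shell
# Sketch: create an IAM user with S3 access from the command line.
# Assumes the CLI is already signed in as an identity that may manage IAM.
# create_s3_user is a hypothetical helper name, not part of the AWS CLI.
create_s3_user() {
  user_name="$1"   # e.g. s3Admin, the name used in this article

  # Create the user, attach the AWS-managed S3 policy, then issue an access key.
  aws iam create-user --user-name "$user_name" &&
  aws iam attach-user-policy --user-name "$user_name" \
    --policy-arn "arn:aws:iam::aws:policy/AmazonS3FullAccess" &&
  aws iam create-access-key --user-name "$user_name"
}
```

Calling create_s3_user s3Admin would print the new access key as the output of the last command. As with the console, that is the only time the secret value is shown.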

Setting Up an AWS Profile On Your Computer

Now that you've created the IAM user with the appropriate access to Amazon S3, the next step is to set up the AWS CLI profile on your computer.

This section assumes that you already installed the AWS CLI version 2 tool as required. For the profile creation, you will need the following information:

- The Access key ID of the IAM user.

- The Secret access key associated with the IAM user.

- The Default region name corresponding to the location of your AWS S3 bucket. You can check out the list of endpoints using this link. In this article, the AWS S3 bucket is located in the Asia Pacific (Sydney) region, and the corresponding endpoint is ap-southeast-2.

- The default output format. Use json for this.

To create the profile, open PowerShell, type the command below, and follow the prompts.

    aws configure

Enter the Access key ID, Secret access key, Default region name, and default output format. Refer to the demonstration below.
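For unattended setups, the same four values can be written without the interactive prompts by using aws configure set. This is a minimal sketch as a POSIX shell function (the aws lines carry over to PowerShell). The function name, the profile name, and the AWS_KEY_ID and AWS_SECRET variables are placeholders chosen for this example, not names the AWS CLI defines.

```shell
# Sketch: store the profile settings non-interactively.
# Assumes AWS_KEY_ID and AWS_SECRET hold the values saved from the IAM console
# (placeholder variable names invented for this example).
configure_profile() {
  profile="$1"   # hypothetical profile name, e.g. s3admin
  region="$2"    # e.g. ap-southeast-2

  aws configure set aws_access_key_id "$AWS_KEY_ID" --profile "$profile" &&
  aws configure set aws_secret_access_key "$AWS_SECRET" --profile "$profile" &&
  aws configure set region "$region" --profile "$profile" &&
  aws configure set output json --profile "$profile"
}
```

A named profile created this way is not the default one, so later commands would need --profile s3admin appended, or the AWS_PROFILE environment variable set to the profile name.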

Testing AWS CLI Access

After configuring the AWS CLI profile, you can confirm that the profile is working by running the command below in PowerShell.

    aws s3 ls

The command above should list the Amazon S3 buckets that you have in your account. The demonstration below shows the command in action. A list of the available S3 buckets indicates that the profile configuration was successful.

To learn about the AWS CLI commands specific to Amazon S3, you can visit the AWS CLI Command Reference S3 page.
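When a bucket listing fails, it helps to know which identity the CLI is actually using. The sketch below, written as a POSIX shell function with a made-up name (check_access), first asks AWS who the active credentials belong to and only then lists the buckets. The aws lines carry over to PowerShell.

```shell
# Sketch: confirm which identity the CLI is using, then list its buckets.
# check_access is a hypothetical helper name.
check_access() {
  # sts get-caller-identity prints the account ID and the ARN of the caller;
  # the bucket listing only runs if that call succeeds.
  aws sts get-caller-identity &&
  aws s3 ls
}
```

If the first command succeeds but the second is denied, the credentials are valid and the problem is more likely the permissions attached to the IAM user.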

Managing Files in S3

With AWS CLI, typical file management operations can be done, such as uploading files to S3, downloading files from S3, deleting objects in S3, and copying S3 objects to another S3 location. It's all just a matter of knowing the right command, syntax, parameters, and options.

In the following sections, the environment used consists of the following.

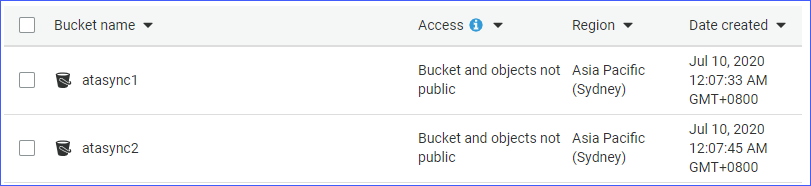

- Two S3 buckets, namely atasync1 and atasync2. The screenshot below shows the existing S3 buckets in the Amazon S3 console.

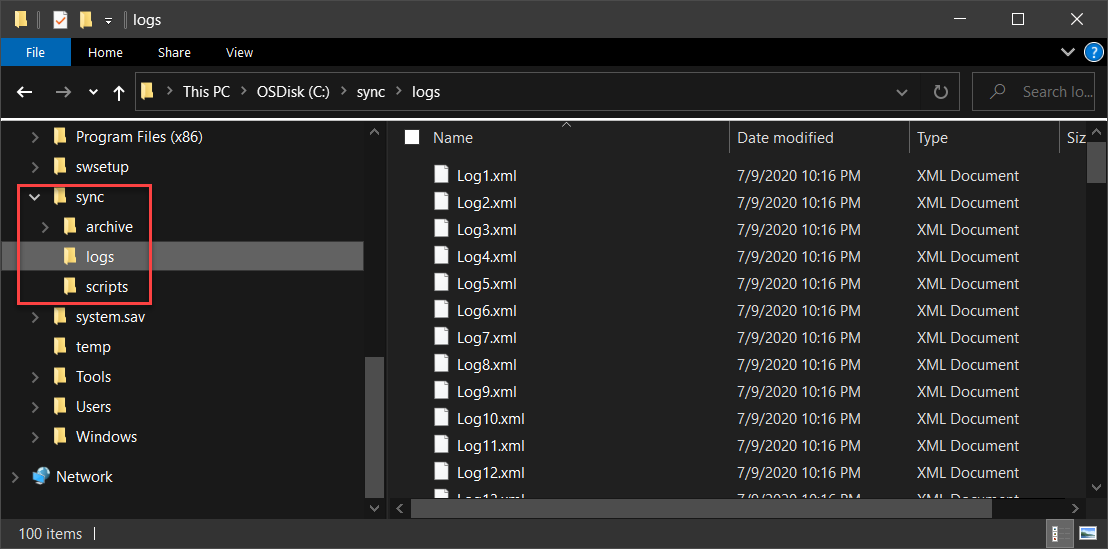

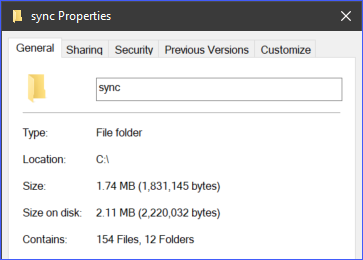

- Local directory and files located under c:\sync.

Uploading Individual Files to S3

When you upload files to S3, you can upload one file at a time, or upload multiple files and folders recursively. Depending on your requirements, you may choose whichever approach you deem appropriate.

To upload a file to S3, you'll need to provide two arguments (source and destination) to the aws s3 cp command.

For example, to upload the file c:\sync\logs\log1.xml to the root of the atasync1 bucket, you can use the command beneath.

    aws s3 cp c:\sync\logs\log1.xml s3://atasync1/

Note: S3 bucket names are always prefixed with s3:// when used with AWS CLI.

Run the above command in PowerShell, but first change the source and destination to fit your environment. The output should look similar to the demonstration below.

The demo above shows that the file named c:\sync\logs\log1.xml was uploaded without errors to the S3 destination s3://atasync1/.

Use the command below to list the objects at the root of the S3 bucket.

    aws s3 ls s3://atasync1/

Running the command above in PowerShell would result in a similar output, as shown in the demo below. As you can see in the output below, the file log1.xml is present in the root of the S3 location.
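In a script, the upload and the follow-up listing can be chained so that the listing only runs when the copy succeeded. Here is a minimal sketch as a POSIX shell function; the name upload_and_list and its argument order are invented for this example, while the aws lines are the same two commands used in this section.

```shell
# Sketch: upload one file to the bucket root, then list the root to confirm.
# upload_and_list is a hypothetical helper name.
upload_and_list() {
  src="$1"      # local file, e.g. 'c:\sync\logs\log1.xml'
  bucket="$2"   # bucket name without the s3:// prefix, e.g. atasync1

  # The listing is skipped if the copy returns a non-zero exit code.
  aws s3 cp "$src" "s3://$bucket/" &&
  aws s3 ls "s3://$bucket/"
}
```

For example, upload_and_list 'c:\sync\logs\log1.xml' atasync1 runs both steps. The single quotes keep a Unix-style shell from treating the backslashes as escape characters.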

Uploading Multiple Files and Folders to S3 Recursively

The previous section showed you how to copy a single file to an S3 location. What if you need to upload multiple files from a folder and sub-folders? Surely you wouldn't want to run the same command multiple times for different filenames, right?

The aws s3 cp command has an option to process files and folders recursively, and this is the --recursive option.

As an example, the directory c:\sync contains 166 objects (files and sub-folders).

Using the --recursive option, all the contents of the c:\sync folder will be uploaded to S3 while also retaining the folder structure. To test, use the example code below, but make sure to change the source and destination as appropriate to your environment.

You'll notice from the code below that the source is c:\sync, and the destination is s3://atasync1/sync. The /sync key that follows the S3 bucket name tells AWS CLI to upload the files into the /sync folder in S3. If the /sync folder does not exist in S3, it will be automatically created.

    aws s3 cp c:\sync s3://atasync1/sync --recursive

The code above will result in the output shown in the demonstration below.
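Before sending a whole folder tree, it can be worth previewing what would be transferred. The aws s3 cp command accepts a --dryrun option that prints the planned operations without performing them. The sketch below wraps that in a POSIX shell function; the name upload_folder and the preview/run switch are assumptions made for this example.

```shell
# Sketch: preview a recursive upload by default, perform it only on request.
# upload_folder and its "run" switch are hypothetical, not AWS CLI features.
upload_folder() {
  src="$1"               # local folder, e.g. 'c:\sync'
  dest="$2"              # S3 destination, e.g. s3://atasync1/sync
  mode="${3:-preview}"   # pass "run" as the third argument to really upload

  if [ "$mode" = "run" ]; then
    aws s3 cp "$src" "$dest" --recursive
  else
    # --dryrun lists the operations without transferring anything.
    aws s3 cp "$src" "$dest" --recursive --dryrun
  fi
}
```

Running upload_folder 'c:\sync' s3://atasync1/sync shows the planned uploads, and adding run as a third argument performs them.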

Uploading Multiple Files and Folders to S3 Selectively

In some cases, uploading ALL types of files is not the best option, such as when you only need to upload files with specific file extensions (e.g., *.ps1). Two other options available to the cp command are --include and --exclude.

While the command in the previous section includes all files in the recursive upload, the command below will include only the files that match the *.ps1 file extension and exclude every other file from the upload.

    aws s3 cp c:\sync s3://atasync1/sync --recursive --exclude * --include *.ps1

The demonstration below shows how the code above works when executed.

As another example, if you want to include multiple different file extensions, you will need to specify the --include option multiple times.

The example command below will include only the *.csv and *.png files in the copy command.

    aws s3 cp c:\sync s3://atasync1/sync --recursive --exclude * --include *.csv --include *.png

Running the code above in PowerShell would present you with a similar result, as shown below.
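The order of the filters matters: filters that appear later in the command take precedence, which is why --exclude comes first and the --include options follow. The sketch below builds that filter list from a set of extensions. It is a POSIX shell function with an invented name (upload_by_extension), and it quotes the patterns because Unix-style shells would otherwise expand an unquoted * before aws ever sees it.

```shell
# Sketch: upload only files with the given extensions.
# upload_by_extension is a hypothetical helper name.
upload_by_extension() {
  src="$1"; dest="$2"
  shift 2   # the remaining arguments are extensions such as csv png

  # Turn each extension into an --include filter appended to the argument list.
  for ext in "$@"; do
    shift                            # drop the bare extension from the front
    set -- "$@" --include "*.$ext"   # append its quoted --include pattern
  done

  # Exclude everything first; the later --include filters take precedence.
  aws s3 cp "$src" "$dest" --recursive --exclude "*" "$@"
}
```

With this helper, upload_by_extension 'c:\sync' s3://atasync1/sync csv png issues the same command as the last example above.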

Downloading Objects from S3

Based on the examples you've learned in this section, you can also perform the copy operations in reverse. That is, you can download objects from the S3 bucket location to the local machine.

Copying from S3 to local requires you to switch the positions of the source and the destination. The source is the S3 location, and the destination is the local path, like the one shown below.

    aws s3 cp s3://atasync1/sync c:\sync

Note that the same options used when uploading files to S3 are also applicable when downloading objects from S3 to local. For example, you can download all objects using the command below with the --recursive option.

    aws s3 cp s3://atasync1/sync c:\sync --recursive

Copying Objects Between S3 Locations

Apart from uploading and downloading files and folders, using AWS CLI, you can also copy or move files between two S3 bucket locations.

You'll notice the command below uses one S3 location as the source, and another S3 location as the destination.

    aws s3 cp s3://atasync1/Log1.xml s3://atasync2/

The demonstration below shows you the source file being copied to another S3 location using the command above.
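Moving an object, rather than copying it, uses aws s3 mv with the same source and destination layout; the object is removed from the source once it has been transferred. A minimal sketch as a POSIX shell function follows, with an invented name (move_object) and argument order.

```shell
# Sketch: move one object from a bucket to the same key in another bucket.
# move_object is a hypothetical helper name.
move_object() {
  src_bucket="$1"    # e.g. atasync1
  dest_bucket="$2"   # e.g. atasync2
  key="$3"           # object key, e.g. Log1.xml

  aws s3 mv "s3://$src_bucket/$key" "s3://$dest_bucket/$key"
}
```

For example, move_object atasync1 atasync2 Log1.xml would leave the file only in atasync2. Keep in mind that S3 object keys are case-sensitive, so Log1.xml and log1.xml refer to different objects.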

Synchronizing Files and Folders with S3

You've learned how to upload, download, and copy files in S3 using the AWS CLI commands so far. In this section, you'll learn about one more file operation command available in AWS CLI for S3, which is the sync command. The sync command only processes the updated, new, and deleted files.

There are some cases where you need to keep the contents of an S3 bucket updated and synchronized with a local directory on a server. For example, you may have a requirement to keep transaction logs on a server synchronized to S3 at an interval.

Using the command below, *.XML log files located under the c:\sync folder on the local server will be synced to the S3 location at s3://atasync1.

    aws s3 sync C:\sync\ s3://atasync1/ --exclude * --include *.xml

The demonstration below shows that after running the command above in PowerShell, all *.XML files were uploaded to the S3 destination s3://atasync1/.

Synchronizing New and Updated Files with S3

In this next example, it is assumed that the contents of the log file Log1.xml were modified. The sync command should pick up that modification and upload the changes made to the local file to S3, as shown in the demo below.

The command to use is still the same as in the previous example.

As you can see from the output above, since only the file Log1.xml was changed locally, it was also the only file synchronized to S3.
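Because sync transfers only what has changed, it is cheap to rerun at an interval. The sketch below repeats the same sync command a fixed number of times with a pause in between. It is a POSIX shell function with an invented name (sync_every), and it is meant only to illustrate the idea; a real deployment would more likely rely on a scheduler such as Windows Task Scheduler or cron.

```shell
# Sketch: rerun the XML log sync a fixed number of times at an interval.
# sync_every is a hypothetical helper name.
sync_every() {
  src="$1"        # local folder, e.g. 'C:\sync\'
  dest="$2"       # S3 destination, e.g. s3://atasync1/
  interval="$3"   # seconds to wait between runs
  runs="$4"       # how many times to sync in total

  i=0
  while [ "$i" -lt "$runs" ]; do
    aws s3 sync "$src" "$dest" --exclude "*" --include "*.xml"
    i=$((i + 1))
    # Pause between runs, but not after the final one.
    if [ "$i" -lt "$runs" ]; then sleep "$interval"; fi
  done
}
```

For example, sync_every 'C:\sync\' s3://atasync1/ 300 12 would sync every five minutes for roughly an hour.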

Synchronizing Deletions with S3

By default, the sync command does not process deletions. Any file deleted from the source location is not removed at the destination. Well, not unless you use the --delete option.

In this next example, the file named Log5.xml has been deleted from the source. The command to synchronize the files will be appended with the --delete option, as shown in the code below.

    aws s3 sync C:\sync\ s3://atasync1/ --exclude * --include *.xml --delete

When you run the command above in PowerShell, the deleted file named Log5.xml should also be deleted at the destination S3 location. The sample result is shown below.
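Since --delete removes objects from the destination, previewing the run first is a sensible precaution. The sync command supports the same --dryrun option as cp. The sketch below is a POSIX shell function with an invented name (sync_with_delete) and a preview/run switch that is an assumption of this example.

```shell
# Sketch: preview a sync that propagates deletions; apply it only on request.
# sync_with_delete and its "run" switch are hypothetical, not AWS CLI features.
sync_with_delete() {
  src="$1"               # local folder, e.g. 'C:\sync\'
  dest="$2"              # S3 destination, e.g. s3://atasync1/
  mode="${3:-preview}"   # pass "run" as the third argument to apply changes

  # Filters come first; --delete and, in preview mode, --dryrun are appended.
  set -- "$src" "$dest" --exclude "*" --include "*.xml" --delete
  if [ "$mode" != "run" ]; then
    set -- "$@" --dryrun
  fi
  aws s3 sync "$@"
}
```

Files excluded by the filters are also excluded from deletion, so with the filters above only *.xml objects at the destination are candidates for removal.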

Summary

Amazon S3 is an excellent resource for storing files in the cloud. The AWS CLI tool expands the ways you can use Amazon S3 and opens up the opportunity to automate your processes.

In this article, you've learned how to use the AWS CLI tool to upload, download, and synchronize files and folders between local locations and S3 buckets. You've also learned that the contents of S3 buckets can be copied or moved to other S3 locations.

There can be many more use-case scenarios for using the AWS CLI tool to automate file management with Amazon S3. You can even try to combine it with PowerShell scripting and build your own tools or modules that are reusable. It is up to you to find those opportunities and show off your skills.

Further Reading

- What Is the AWS Command Line Interface?

- What is Amazon S3?

- How To Sync Local Files And Folders To AWS S3 With The AWS CLI


Source: https://adamtheautomator.com/upload-file-to-s3/
